Last Updated on 2022-10-21 by Joop Beris

We live in the Age of Information. Never before in the history of human civilization have so many people had access to such a wealth of information. For many of us, knowledge is quite literally at our fingertips. I used to believe that if only everyone had access to information, we would all benefit as society would become more knowledgeable with each generation. Unfortunately, if the recent pandemic has taught us anything, it is that this is a rather idealistic portrayal. There is a lot of misinformation out there and it seems to spread more readily than accurate information. Resisting misinformation is a much-needed skill. And this is where a book from 1995 can still help us today!

The problem of misinformation

One of the things I want to do with this blog is to address the issues with misinformation and pseudoscience. You might wonder why I think those are a problem, so let me explain briefly. The things we believe about the world generally inform our actions. For instance, if I believe the building I’m in is on fire, I will do my best to get out as quickly as possible. If my beliefs are correct, that may just save my life. If the building is on fire but I incorrectly believe that it isn’t, I may end up dead. Or another example, closer to the current situation: if I correctly believe that a vaccination against Covid-19 will protect me and those around me, I will be more inclined to get vaccinated. The more closely the things we believe align with reality, the better our decisions will generally be.

This works on a wider scale too. The more people believe true things and reject false things, the better decisions we make as a whole. For a functioning democracy, an educated, well-informed public is crucial because such a public is most likely to make educated, well-informed decisions that benefit not only themselves but others as well. This is where misinformation gets in the way and why resisting misinformation is such an important skill.

Resisting misinformation

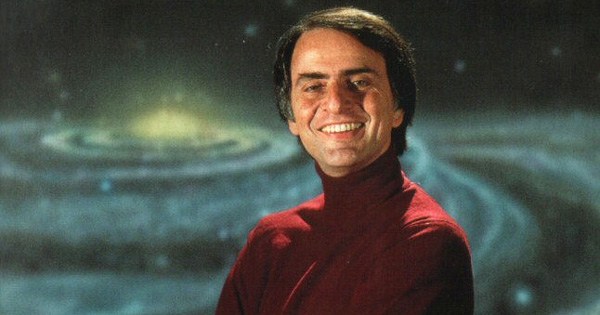

In 1995, Random House published “The Demon-Haunted World”, the last book Carl Sagan published during his lifetime. In this book, subtitled “Science as a Candle in the Dark”, the famous astronomer, astrophysicist, astrobiologist and science communicator explains the scientific method to the general public, advocating for critical and sceptical thinking. As such, this book is just as relevant today as it was back in 1995.

Sagan offers the reader what he calls the Baloney Detection Kit. Because I am convinced that critical and sceptical thinking is vital to our societies and ultimately to our survival, I want to share this toolkit with you now, and I sincerely hope that you will use it and spread it.

The Baloney Detection Kit

- Wherever possible there must be independent confirmation of the “facts.”

If several sources report on the same event and they agree about most of the details, the reports are probably reliable. But these sources must be independent of each other.

- Encourage substantive debate on the evidence by knowledgeable proponents of all points of view.

A substantive debate means that discussion is based on reality and is meaningful. It doesn’t include idle speculation or outlandish ideas. The phrase “knowledgeable proponents” is also key. There’s no point listening to social media personalities for ways to combat Covid-19. Models, singers and actors aren’t doctors or virologists.

- Arguments from authority carry little weight — “authorities” have made mistakes in the past. They will do so again in the future. Perhaps a better way to say it is that in science there are no authorities; at most, there are experts.

This one is perhaps difficult to grasp for many. However, it’s important to keep in mind that even the most knowledgeable people make mistakes. Even Albert Einstein, a name that’s almost synonymous with genius, made several mistakes during his career. So the argument from one person, no matter how much of an expert he/she is, should not carry much weight. It’s what you can show to be true, not who said it.

- Spin more than one hypothesis. If there’s something to be explained, think of all the different ways in which it could be explained. Then think of tests by which you might systematically disprove each of the alternatives. What survives, the hypothesis that resists disproof in this Darwinian selection among “multiple working hypotheses,” has a much better chance of being the right answer than if you had simply run with the first idea that caught your fancy.

An event or fact can often be explained in more than one way. The explanation or hypothesis you prefer isn’t necessarily the right one. So if multiple explanations for an event could be true, it’s best to try and disprove all of them. The ones that survive this test will be all the more credible.

- Try not to get overly attached to a hypothesis just because it’s yours. It’s only a way station in the pursuit of knowledge. Ask yourself why you like the idea. Compare it fairly with the alternatives. See if you can find reasons for rejecting it. If you don’t, others will.

A major difference between the scientific community and the rest of society is that scientists will try to prove themselves wrong instead of right. Even if you are wrong, it means you have learned something, and every hypothesis eliminated brings you one step closer to a better answer. The process of peer review is tough but it leads to better understanding.

- Quantify. If whatever it is you’re explaining has some measure, some numerical quantity attached to it, you’ll be much better able to discriminate among competing hypotheses. What is vague and qualitative is open to many explanations. Of course there are truths to be sought in the many qualitative issues we are obliged to confront, but finding them is more challenging.

Put simply, if you can measure it in any meaningful way: measure it! It’s much easier to compare numbers than it is to evaluate people’s often subjective arguments.

- If there’s a chain of argument, every link in the chain must work (including the premise) — not just most of them.

Each argument in a chain must flow logically from the previous link. Links should not be skipped, and if even one link in the chain fails, the whole chain of arguments isn’t valid. For instance, this is a valid chain: Tabby is a cat. Cats are mammals. Therefore, Tabby is a mammal.

- Occam’s Razor. This convenient rule-of-thumb urges us when faced with two hypotheses that explain the data equally well to choose the simpler.

Ideally, a hypothesis that includes the fewest assumptions or steps should be preferred over one that makes many assumptions or needs many steps. Sir Isaac Newton phrased it in the following way: “We are to admit no more causes of natural things than such as are both true and sufficient to explain their appearances. Therefore, to the same natural effects we must, as far as possible, assign the same causes.”

- Always ask whether the hypothesis can be, at least in principle, falsified. Propositions that are untestable, unfalsifiable are not worth much. Consider the grand idea that our Universe and everything in it is just an elementary particle — an electron, say — in a much bigger Cosmos. But if we can never acquire information from outside our Universe, is not the idea incapable of disproof? You must be able to check assertions out. Inveterate sceptics must be given the chance to follow your reasoning, to duplicate your experiments and see if they get the same result.

Simply put: if there’s no effective way to prove your hypothesis wrong, it’s a weak hypothesis. If I say there’s a dragon in my attic but that it is invisible, doesn’t require food, makes no sounds and doesn’t show up on infrared imaging, how could anyone prove the dragon is not there? There’s no reason to consider such a hypothesis. So whenever you hear someone make a statement, consider how that statement could be falsified. If it can’t, there’s little reason to accept the statement as true.

(Photo by Chinmay Singh on Pexels.com)

Resisting misinformation belongs on school curriculums

I hope that you also see value in the development of critical thinking skills. I wish that schools would focus more on how to think in their curriculum. Resisting misinformation should be taught in school, just as children learn to prove their maths. Critical thinking is also a prerequisite for developing free thought because you can use it to examine your own thinking. If you think that the Baloney Detection Kit is a valuable tool, please feel free to share this post.

Hi Joop. I have been checking back now and then on your site to see what is new. I am commenting here, in what seems the most fitting place, to provide this link:

https://discernreport.com/scientists-have-been-making-amazing-dna-and-soft-tissue-discoveries-that-should-completely-alter-how-we-view-the-ancient-history-of-earth/

I find it interesting that some of the discoveries cited in this article are over 30 years old. In other words, the claim that dinosaurs have been extinct for 65 million years has been in question for at least 3 decades. The article includes links to sources used. For critical thinkers, there are some very compelling questions that should lead an inquiring mind to look further. I also found this other link to a conversation on the subject:

https://youtu.be/ykwgE9MlNCs

Some may not like these sources and would write them off as misinformation sources, but evidence is evidence. After reading this article and watching this video, my son brought me a textbook that declares these same evidences as being in conflict with the 65 million year old dinosaur claims. The theories don’t fit the findings.

How is it that the so-called science community continues to deny what is glaringly obvious and refuses to question the validity of a dating system that is irrelevant, along with the plethora of theories being used to prop up this house of cards? Sounds more like powerful religious zealots attacking the truth to maintain their agenda at all cost.

Hi Dave, thanks for your comment.

There is a huge number of issues to unpack with regard to your comment but let’s start with the last paragraph.

Why draw the conclusion that there must be some kind of conspiracy at work protecting an agenda? What agenda would that be? Why would the scientific community deny findings that contradict current understanding? They don’t seem to be doing that with the findings of the James Webb telescope which is challenging our understanding of the universe in new and exciting ways.

People who claim that the scientific community doesn’t want to hear them or discuss their “theories”, are also often people who make outlandish or pseudo-scientific claims. People like Graham Hancock for instance.

That brings me to your cited sources. I’m afraid they’re questionable at best. The Discern Report definitely is. It leans far right, almost to the extreme, contains poor sourcing, and seems to be espousing conspiracy theories, propaganda and unproven claims. I would take anything written there with a generous helping of salt.

As to the YouTube video, anyone who asks the question “Is Genesis history?” and comes away with any other answer than a resounding “Hell no!” has lost a lot of credibility right off the bat. Virtually no one except the Young Earth Creationism community thinks otherwise and they are on the fringe even in Christian circles. The largest Christian denomination in the world, the Roman Catholic Church, has embraced the Big Bang and the theory of evolution. In creationist circles, not everyone agrees with microbiologist Kevin Anderson in the video. Here is an article by biochemist Fazale Rana, an Old Earth Creationist, who disagrees. Mr. Rana is himself a Christian apologist.

Now I am not a microbiologist or a biochemist so it’s hard for me to dispute the findings as described by these men. However, I can spot a fallacious argument when I see one. Near the end of the video, Anderson presents us with a classic black and white fallacy: either scientists have to agree that their dating mechanisms are wrong or some magical process preserved the soft tissue in the mentioned dinosaur fossils. Really? Those are the only two options? I can see at least a third option: we don’t have sufficient data yet to explain the preservation and we will continue to investigate. That doesn’t sound as exciting as a complete dismissal of all kinds of dating mechanisms from geology, chemistry, cosmology, dendrochronology, etc. But it has the benefit of being reasonable, open to new evidence, etc.

All in all, your sources being as they are and the ill-supported claims being made, I am very skeptical about anything they proclaim.